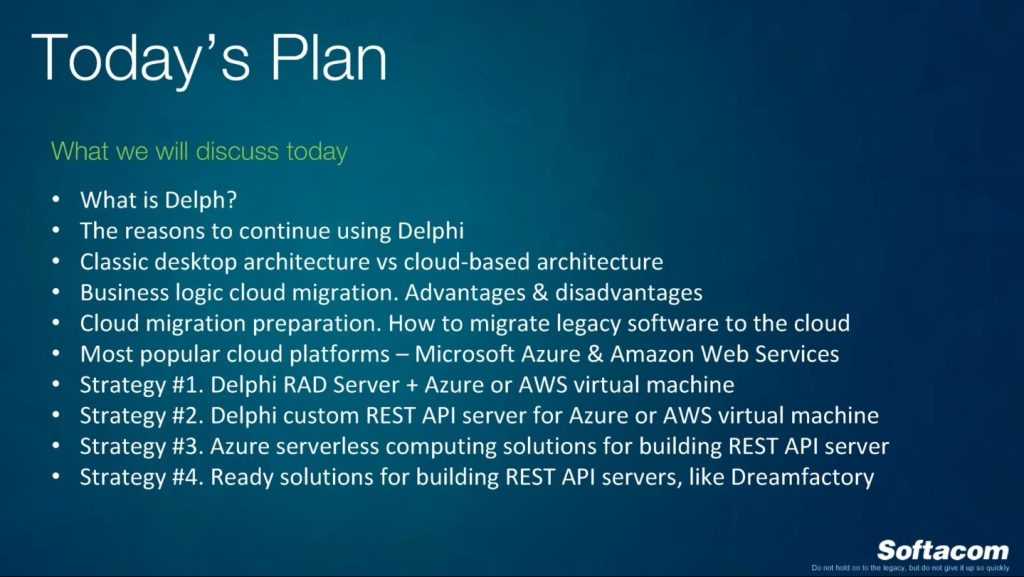

Today we will discuss migrating the business logic of Delphi applications to the cloud. First, I will share my view of what Delphi is today, its pros and cons, and the reasons why we keep using it. Then we will compare the classic architecture with a cloud-based architecture and discuss their advantages and disadvantages. After that I will present several strategies, with some technical background, for how we can take current Delphi applications, rebuild or reengineer them, extract the business logic and database interactions, and put all of this into the cloud while keeping, for example, Delphi clients with a user interface.

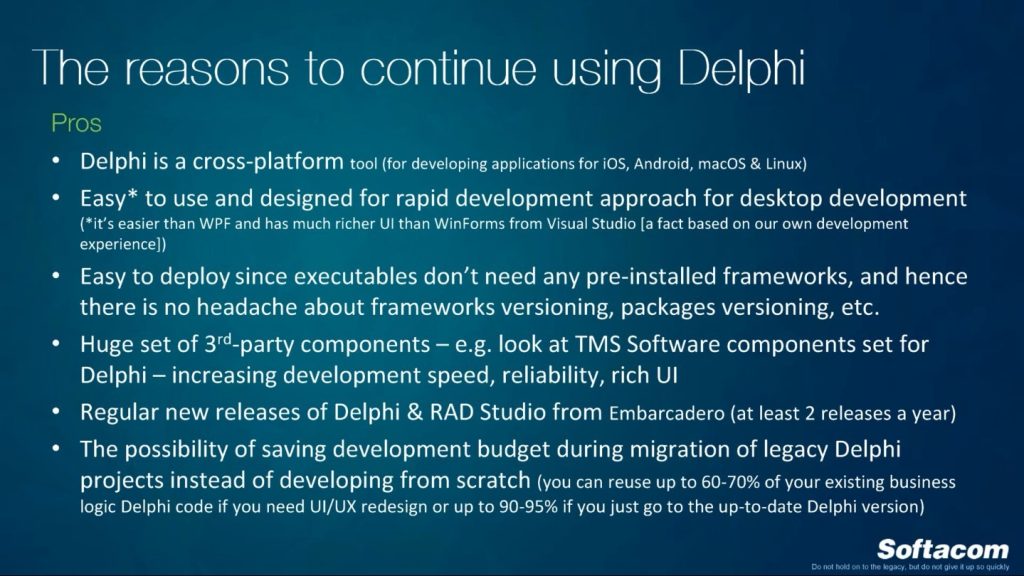

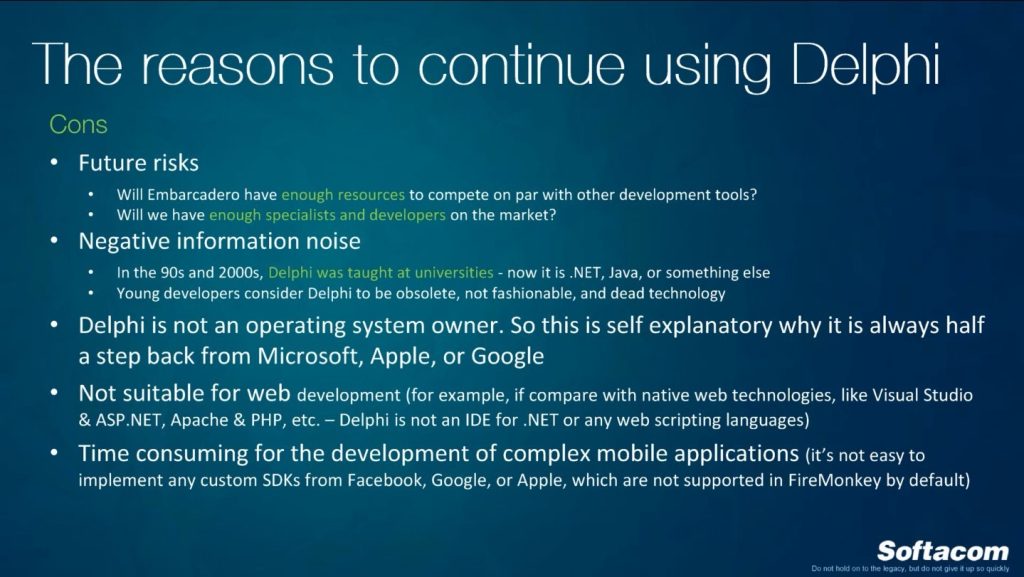

What is Delphi today? I hope you know almost everything about it, but we have a tradition of saying a couple of words about the history. Right now Delphi is a software development tool for building desktop and mobile applications. You know that not so long ago Embarcadero added support for Linux, and not only for console applications but also for Linux applications with a UI. Delphi is up to date even though it was first released in 1995, because Embarcadero updates it regularly. We also have a huge community with very experienced engineers and developers. Of course, in the nineties it was a revolutionary technology for rapid application development. I have used Delphi since Delphi 3, then 5, 7, and so on. An interesting fact that not everybody knows is that the Chief Architect behind Delphi was Anders Hejlsberg, who was later persuaded to move to Microsoft, where he worked as the lead architect of C#. That is just food for thought. After that Microsoft introduced Visual Studio .NET 2003, and I think of it as Microsoft’s version of RAD Studio, because before that, in the Visual Studio 6 days, building an application with a user interface was painful compared with Delphi 3 or Delphi 5, for example. Of course, Microsoft spent a lot of money on advertising and marketing these tools and, unfortunately, the popularity of Delphi started to decrease, and eventually .NET took the lead.

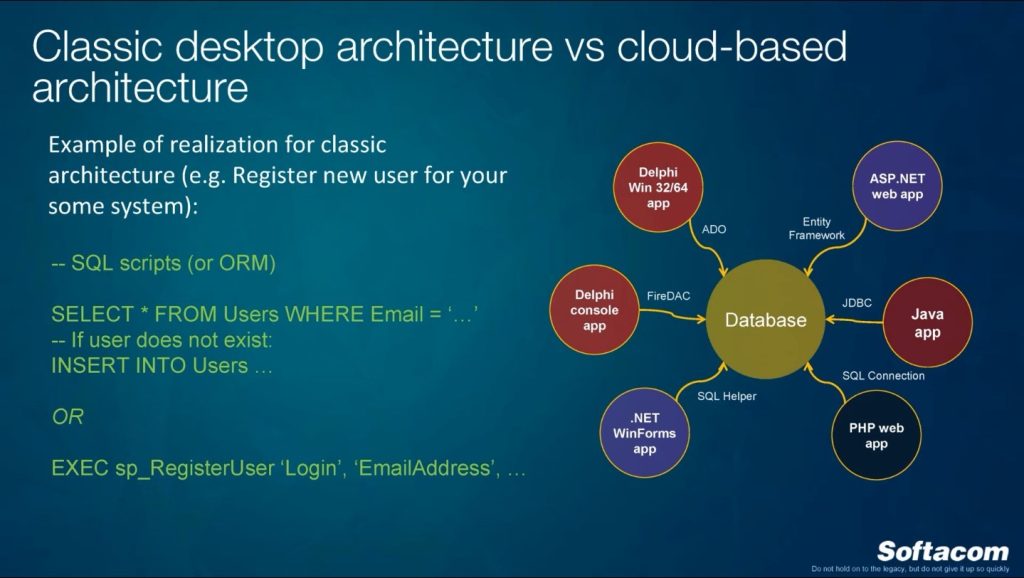

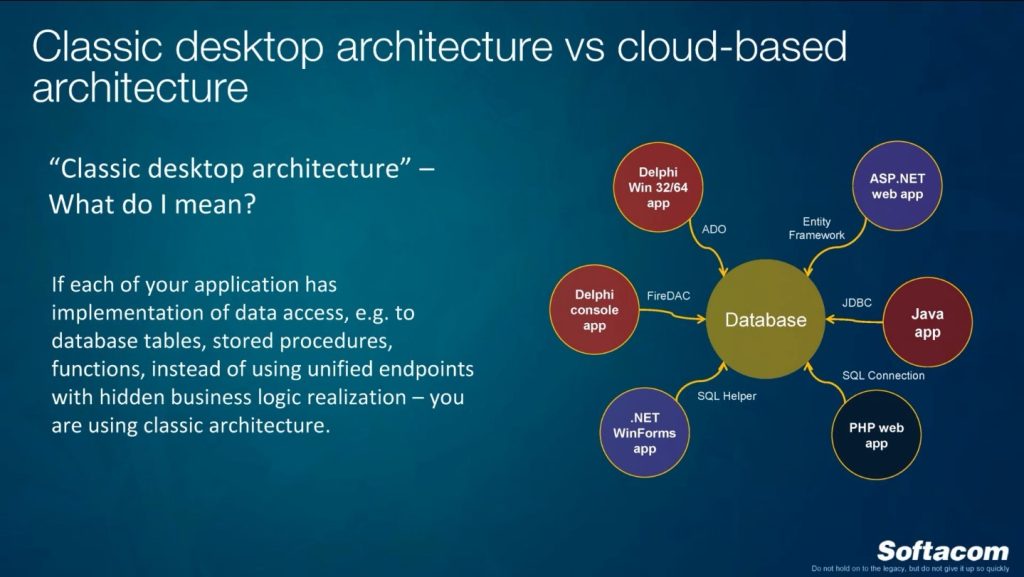

That was the general part. Now we will discuss the subject of our webinar in greater detail. First I’d like to introduce my own term, “classic desktop architecture”. I mean that we have a lot of Delphi software, with applications that have data models, classes, and generic classes, but all of this software connects directly to the database.

Of course, we can have some generic classes that hide part of the implementation and connect to a specific database such as MySQL or Oracle, it doesn’t matter. This is what classic architecture means to me, and this is what I think we have to fight today and in the future. In the classic architecture we have database components or SQL queries inside the application source code; we query the tables directly or call stored procedures, it does not matter. We always know which database each application uses, even in a complex system with many executables, where some of them use ADO and some use FireDAC or other providers. The classic architecture also has its own pros and cons.

What are the cons of this classic desktop architecture? The first is versioning. If you change something in your database, especially in a common table or stored procedure, you have to update all your clients somehow, or create some crazy additional supporting stored procedures that hide all these changes, and so on. The second is the specific data access implementation: your data layer is not abstracted. The final layer, of course, is the database itself: you have to connect to MS SQL Server, MySQL, MongoDB, or any other database. The next thing is this: what if we want to give a 3rd-party system access to our platform and our data? We have our internal system, but I would like to provide an API to somebody so that they can connect and work with my data. If all you have is a database, unfortunately, it is not very pretty, and they will even know which database you are using. You have to think about permissions, and granting them all is not easy. Take even SQL Server with a mid-sized system that has 100 stored procedures and tables: it is crazy to open up access, create a user, set up permissions and roles, and hand the password to somebody.

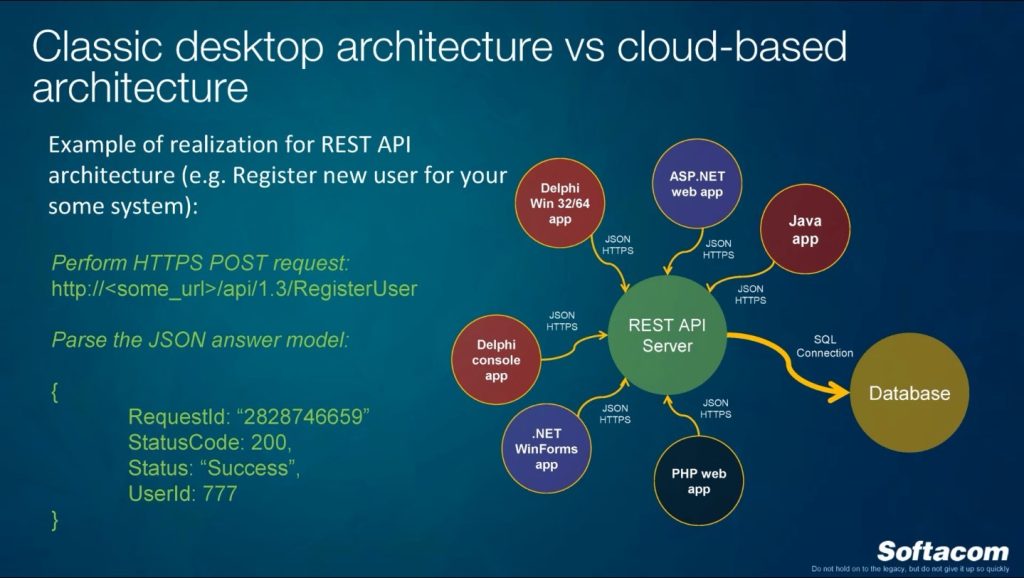

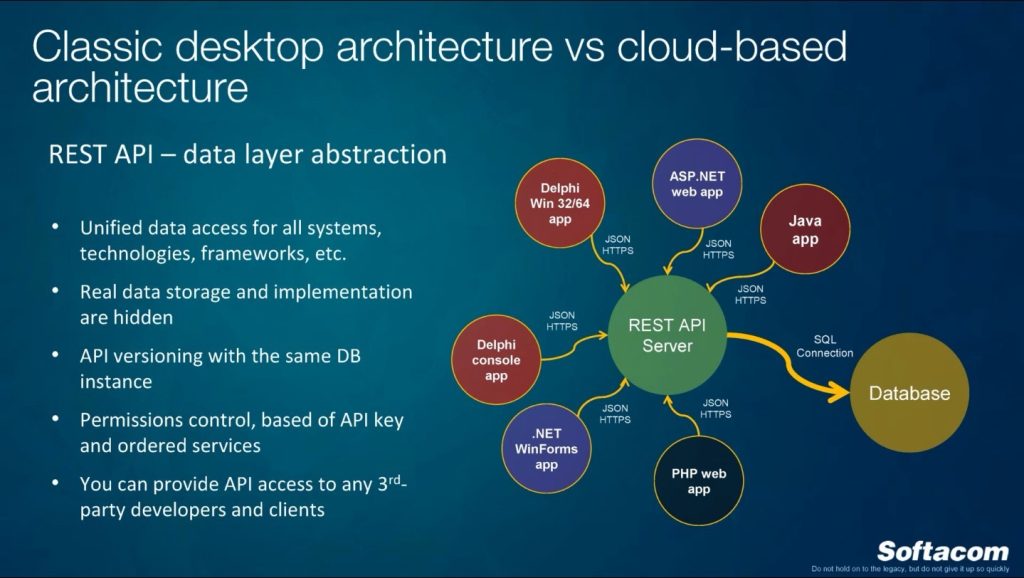

Now I would like to introduce the cloud-based architecture. What’s the difference? We have a database that is wrapped by a web application implementing a REST API server. All the clients then have access to and work only with this REST API server; they know nothing about how database access is actually implemented, and we hide a lot of middle business logic there, which extends the database functionality and serves all these clients. Instead of working with the database through any direct reference, your clients work with JSON or XML: they send HTTP POST, GET, PATCH, and DELETE requests and receive models in the responses. There are now a lot of tools, for Delphi and for other systems, that generate classes from Swagger specifications.
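To make the idea concrete, here is a minimal sketch in Python rather than Delphi, using only the standard library. Everything in it is an assumption made for illustration: the `products` table, the `/api/v1/products` entry point, and the in-memory SQLite database standing in for the real one. It only shows the shape of the architecture: the server owns the SQL, and the client sees nothing but HTTP and JSON.

```python
import json
import sqlite3
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-memory database standing in for the real one.
db = sqlite3.connect(":memory:", check_same_thread=False)
db.execute("CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT, price REAL)")
db.execute("INSERT INTO products (name, price) VALUES ('Keyboard', 49.9)")
db.commit()
db_lock = threading.Lock()


class ApiHandler(BaseHTTPRequestHandler):
    """Server side: the only place that knows about SQL."""

    def do_GET(self):
        if self.path != "/api/v1/products":
            self.send_error(404, "Unknown entry point")
            return
        with db_lock:
            rows = db.execute("SELECT id, name, price FROM products").fetchall()
        body = json.dumps(
            [{"id": r[0], "name": r[1], "price": r[2]} for r in rows]
        ).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the example quiet


def fetch_products(port):
    """Client side: plain HTTP plus JSON, no database driver involved."""
    connection = HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        connection.request("GET", "/api/v1/products")
        return json.loads(connection.getresponse().read())
    finally:
        connection.close()


def demo():
    server = HTTPServer(("127.0.0.1", 0), ApiHandler)  # port 0: pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return fetch_products(server.server_address[1])
    finally:
        server.shutdown()
        server.server_close()
```

Calling `demo()` starts the server on a free local port, performs one GET as a client would, and returns the decoded list of products. A Delphi, JavaScript, or .NET client would do the same thing with its own HTTP library.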

A REST API is a data layer abstraction. Again, all the clients work through a unified protocol. What are the pros? Unified data access for all systems, technologies, frameworks, and so on. A JavaScript web application will work with this REST API; so will our Delphi application, an ASP.NET application, or anything else, it doesn’t matter. The real database implementation is hidden, which matters if we provide our platform to somebody else. You can even divide the work inside the team: one team works on the REST API and provides the documentation, while other teams work only on the clients, communicate with the REST API, and don’t think about database changes. With a REST API we can also do API versioning on top of the same database instance. It means that for the same database we can have several REST API versions which, for example, keep obsolete or legacy client versions working. If we need new clients and new features, we develop a new version of the REST API; it hides the database changes and contains the middle logic that converts the data for both new and legacy applications. Permissions control can be much easier, too. You can grant access to your clients based on an API key, and you can divide access to the API by entry point and, within each entry point, by method, for example GET list, DELETE, or UPDATE. Of course, for some systems you have to develop this from scratch, and for some you get it out of the box. As I already said, this is what we develop for our clients: you can provide API access to any 3rd-party developers and clients. We are developing a system for healthcare, and somebody asks: can I connect to your system? Of course! Here is the API, here is the documentation, here is your API key. Connect and work. And we don’t show you any database; everything we have stays under the hood of our system.
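Two of these points, versioning over one database and permissions by API key, can be sketched in a few lines. This is an illustration in Python under invented assumptions, not a real framework: the row layout, the `v1`/`v2` response shapes, the API keys, and the `handle` function are all hypothetical.

```python
# Hypothetical row as stored in the current database schema.
ROW = {"id": 7, "first_name": "Ann", "last_name": "Lee", "email": "ann@example.com"}


def customer_v1(row):
    """Legacy shape: old clients expect a single 'name' field."""
    return {"id": row["id"], "name": f"{row['first_name']} {row['last_name']}"}


def customer_v2(row):
    """Current shape: split name fields plus the e-mail address."""
    return {
        "id": row["id"],
        "firstName": row["first_name"],
        "lastName": row["last_name"],
        "email": row["email"],
    }


# Both API versions read the same database row; only the mapping differs.
SERIALIZERS = {"v1": customer_v1, "v2": customer_v2}

# Hypothetical API keys mapped to the (method, entry point) pairs they may call.
PERMISSIONS = {
    "key-internal": {("GET", "customers"), ("DELETE", "customers")},
    "key-partner": {("GET", "customers")},
}


def handle(api_key, method, path):
    """Return (status, body) for paths such as '/api/v2/customers'."""
    parts = path.strip("/").split("/")
    if len(parts) != 3 or parts[0] != "api" or parts[1] not in SERIALIZERS:
        return 404, None
    version, entry_point = parts[1], parts[2]
    if (method, entry_point) not in PERMISSIONS.get(api_key, set()):
        return 403, None
    if method == "GET":
        return 200, [SERIALIZERS[version](ROW)]
    return 204, None  # DELETE: nothing to return in this sketch
```

Here a legacy client keeps calling `/api/v1/customers` and gets the old shape, while the partner key can read but not delete.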

Here are the cases when legacy software modernization brings you benefits. If you use a real cloud platform, you don’t need redundant processing capacity to cover rare peaks of workload. You can scale computing resources without owning and maintaining them in-house. You get profitable opportunities to provide access to your software to other companies. With some options you will even be able to design, develop, and host all your APIs in the cloud without any local development. Unfortunately, that is not about Delphi or Embarcadero, but I have to say a couple of words about such possibilities. And you can support multiple versions of your APIs and keep legacy projects alive.

If we have advantages, I have to mention disadvantages as well. But each of them comes with a huge question mark: is it really a disadvantage or not? If we extract our business logic and put it into the cloud, we have to reengineer and rebuild our system. We have to create a new project that will hold all this stuff, we have to change our clients, and after that we will have one server-side REST API project that we have to support, develop, and keep up to date. I mean that in the classic architecture, if we have five executables and one database, in the end we still have five executables and one database. If we move to the cloud, we will have five executables plus one more project for the REST API server. Of course, we will cut a lot of logic out of our executables, but we will have a 6th project. There is also an additional step in development. Previously you could just change your database and then update all your executables. Now you will also need to change your REST API server project or develop a new version of the API.

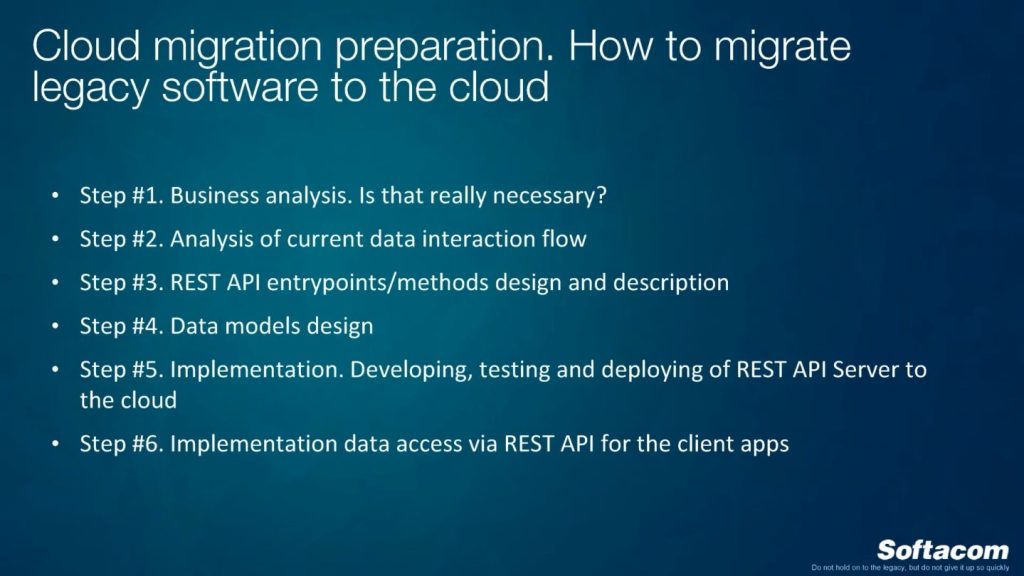

Which steps do we need to migrate legacy software to the cloud? For developers it’s clear. The first step is business analysis: is it really necessary to move this application’s business logic to the cloud? Maybe some decision makers should think it over, calculate the ROI, and create their own formulas. The second step is to analyze the current data interaction flow. Step #3 is the design and description of the REST API entry points and methods. We have to design them before development, and we can do it with some tools which I will show you later. Step #4: we have to design data models. They are like the input and output parameters for your entry points, for your REST API methods. The 5th step is implementation: developing, testing, and deploying the REST API server to the cloud. And the last step is implementing data access via the REST API in the client applications.
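As an illustration of the data model step, here is a small Python sketch of one input model and one output model for a hypothetical "make order" method. The class names, the field names, and the camelCase JSON keys are assumptions chosen for the example; in a Delphi client the same models would be plain classes or records.

```python
import json
from dataclasses import dataclass


@dataclass
class CreateOrderRequest:
    """Input model: what the client sends to a hypothetical POST /orders."""
    product_id: int
    quantity: int

    @classmethod
    def from_json(cls, text):
        data = json.loads(text)
        model = cls(product_id=int(data["productId"]), quantity=int(data["quantity"]))
        if model.quantity <= 0:
            raise ValueError("quantity must be positive")
        return model


@dataclass
class OrderResponse:
    """Output model: what the entry point returns to the client."""
    order_id: int
    status: str

    def to_json(self):
        return json.dumps({"orderId": self.order_id, "status": self.status})
```

Designing such models up front fixes the contract between the server team and the client teams before either side writes any business logic.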

Now a couple of words about popular cloud platforms. Of course, there is Google Cloud Platform, but I will talk about Azure and Amazon Web Services, because we have worked with both and I’m well aware of the differences, the price differences, and so on. Azure is my favorite cloud platform. It is really easy to use and has a much easier, more user-friendly admin console, especially if you are a Delphi developer living in the Microsoft and Windows world. It is much nicer from the management point of view and more suitable for Windows development, but it is more expensive, like everything from Microsoft. As for Amazon, it is more popular for UNIX and Linux technologies and does not have as many built-in products for Windows technologies; when they provide something for Windows, it is more like an exception. Unfortunately, compared with Azure, the admin console is not as friendly for developers from the Windows world, and if you are a newbie, you can spend a lot of time creating a simple Windows web application. The terminology is totally different; it’s like another world. But it is cheaper than Azure, that’s a fact.

By the way, a couple of words about what a cloud platform is and what I mean by this term. A cloud platform is a solution that you can simply use without thinking any more about managing the resources, the hardware, security, and updates. With some nuances, of course. It also has huge and beautiful capabilities for scaling. Compare it with regular shared hosting, where you can deploy an ASP.NET application that implements, for example, a REST API server with a database: unfortunately, that is not cloud, because you cannot scale the resources of the shared hosting, or of the internal machine that hosts this REST API server, in real time. In the cloud you can scale resources such as memory and computing power on the fly. If, for example, you run a marketing campaign and a lot of people come to your mobile application, you have to increase the computing power of this machine immediately. With regular shared hosting or your internal machine you cannot do this. In Azure or Amazon you can: you increase your computing power, and when the load decreases you go back from 16 cores to your 3 cores and, what is very important, you pay only for the resources you used. Sometimes you can even connect the resources you need, then disconnect and stop them, and you won’t pay for them. Today we can develop very flexible systems that are hosted somewhere and are very stable. I don’t like being responsible for a Windows machine that somebody keeps in some room without light, and I don’t like being responsible for internet connections, all these cyberattacks, and so on. If we work with Azure or Amazon, they do all this stuff.
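A quick back-of-the-envelope calculation shows why paying only for what you use matters. The numbers below are invented for the example and are not real Azure or AWS prices: a hypothetical rate of 5 cents per core-hour, a 30-day month, and a two-day campaign peak that needs 16 cores instead of the usual 3.

```python
# All figures are hypothetical and only illustrate the arithmetic.
PRICE_CENTS_PER_CORE_HOUR = 5
HOURS_IN_MONTH = 30 * 24      # 720 hours
PEAK_HOURS = 2 * 24           # a two-day marketing campaign
BASE_CORES = 3
PEAK_CORES = 16


def fixed_capacity_cost():
    """Keep 16 cores all month so the rare peak is always covered."""
    return PEAK_CORES * HOURS_IN_MONTH * PRICE_CENTS_PER_CORE_HOUR


def elastic_cost():
    """Run 3 cores normally and scale to 16 cores only during the peak."""
    base_core_hours = BASE_CORES * (HOURS_IN_MONTH - PEAK_HOURS)
    peak_core_hours = PEAK_CORES * PEAK_HOURS
    return (base_core_hours + peak_core_hours) * PRICE_CENTS_PER_CORE_HOUR


if __name__ == "__main__":
    print("fixed  :", fixed_capacity_cost() / 100)
    print("elastic:", elastic_cost() / 100)
```

Under these assumptions the always-on machine costs about four times as much as the elastic one, which is exactly the redundant capacity that shared hosting or an in-house server forces you to keep.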

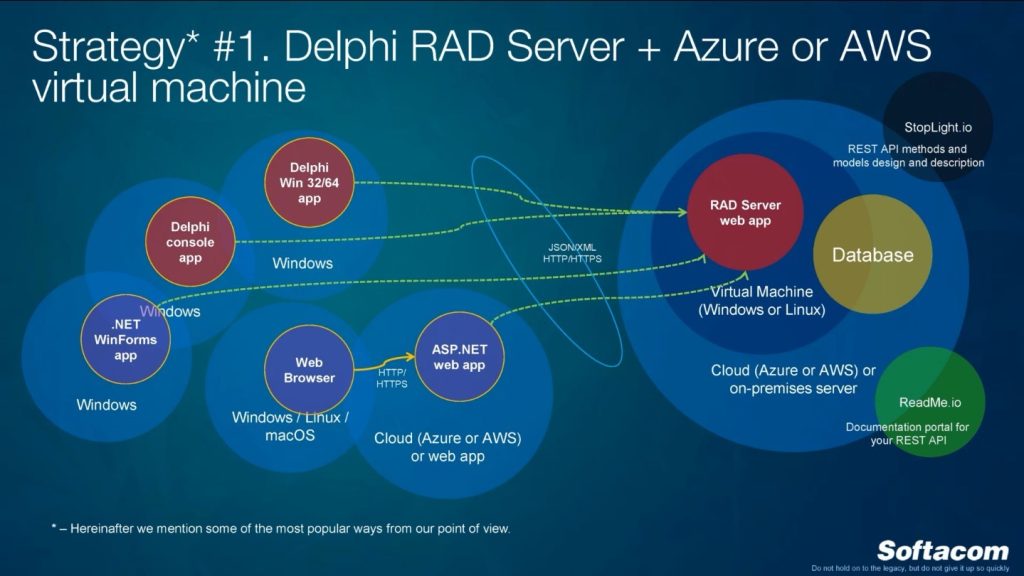

Now let’s talk about the strategies for moving business logic to the cloud. I think there are many more strategies in the world, because there are so many different technologies and techniques, popular and not so popular, but I will present only the four that we have already used in our company, for our clients, in commercial projects. The first strategy is Delphi RAD Server on an Azure or AWS virtual machine. Maybe two years ago, when Embarcadero introduced RAD Server, nobody understood what it meant, because it was just a set of tools. Today RAD Server is a standalone, ready-to-use solution for implementing a REST API for your application. In the cloud it can work on a Windows or Linux machine: you create a virtual machine (unfortunately, it has to be a virtual machine, not a slot or a web application), deploy the RAD Server solution from Embarcadero to it, connect it to a database, which can also be hosted in the cloud, and with some changes and additions you have a REST API server practically out of the box. Of course, it has various limitations, but you can extend its functionality with RAD Server packages, which you develop in Delphi and then deploy to the RAD Server web application. Out of the box, RAD Server works like a database wrapper: if you have some tables, the RAD Server web application lets you perform POST, GET, PUT, and DELETE requests against them through the REST API. With RAD Server we stay in the Delphi world, yet any other application can work with it, not only one developed in Delphi; it can also be a .NET WinForms application, it doesn’t matter.
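The "database wrapper" idea itself is easy to picture: each HTTP method maps to one kind of SQL statement on a table. The sketch below is written in Python with SQLite purely as an illustration. It is not RAD Server code and does not use any RAD Server API; the `execute_request` function and the `ALLOWED_TABLES` whitelist are hypothetical.

```python
import sqlite3

# Hypothetical whitelist: table name -> columns a client may write.
ALLOWED_TABLES = {"products": ("name", "price")}


def execute_request(db, method, table, record_id=None, payload=None):
    """Translate one REST-style call into one SQL statement."""
    if table not in ALLOWED_TABLES:
        raise KeyError(f"table {table!r} is not exposed")
    columns = ALLOWED_TABLES[table]
    if method == "GET":
        if record_id is None:
            return db.execute(f"SELECT * FROM {table}").fetchall()
        return db.execute(f"SELECT * FROM {table} WHERE id = ?", (record_id,)).fetchall()
    if method == "POST":
        placeholders = ", ".join("?" for _ in columns)
        cursor = db.execute(
            f"INSERT INTO {table} ({', '.join(columns)}) VALUES ({placeholders})",
            [payload[c] for c in columns],
        )
        return cursor.lastrowid  # id of the created record
    if method == "PUT":
        assignments = ", ".join(f"{c} = ?" for c in columns)
        cursor = db.execute(
            f"UPDATE {table} SET {assignments} WHERE id = ?",
            [payload[c] for c in columns] + [record_id],
        )
        return cursor.rowcount  # number of updated records
    if method == "DELETE":
        cursor = db.execute(f"DELETE FROM {table} WHERE id = ?", (record_id,))
        return cursor.rowcount  # number of deleted records
    raise ValueError(f"unsupported method {method!r}")
```

Table and column names come only from the whitelist, and values are always passed as parameters, so the client never sends SQL. Anything beyond this one-to-one mapping is the "middle logic" that you would add through your own extensions.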

What is the best practice for designing a REST API server? I want to recommend two resources. The first one: before developing any REST API we want to design it. I’d like to create a list of the methods I will have: get a list of products, update a product, make an order. I don’t want to design it in Microsoft Word or on paper, because we have a beautiful free resource called stoplight.io. There you can design all your entry points and all your input and output models, describe field names and field sizes, and provide descriptions for all entry points and methods. The beauty of this solution is that in the end it generates a file for you, for example in Swagger format, which is the industry standard for describing a REST API. Later you can feed this file to different documentation tools. After you design your REST API, you will not need to sit down, create PDFs or Word documents, and explain from scratch how to work with your API. The second resource I want to suggest is readme.io. This web application lets you register, import the Swagger file, and end up with very beautiful documentation for your whole REST API, which you can give to your developers, your internal team, external teams, and so on. It is really modern; I have seen a lot of platforms and providers that I think use readme.io for their documentation. It even has a built-in REST API client for testing. That was about the RAD Server solution, but RAD Server is not free: you have to think about an additional license just for RAD Server, so go to the Embarcadero website and check the licensing. But, again, RAD Server does not only give access to the tables; it also has a built-in authentication system and other things that help you start with a REST server very fast, without development. It really can save you time.
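For those who have never opened such a file, here is roughly what a very small Swagger 2.0 description looks like, built as a Python dictionary and dumped to JSON. The "Shop API" title, the paths, and the `Product` model are made up for the example, and a file exported from a design tool would normally contain much more detail.

```python
import json


def build_spec():
    """A tiny Swagger 2.0 document with two entry points and one model."""
    product = {
        "type": "object",
        "required": ["id", "name"],
        "properties": {
            "id": {"type": "integer"},
            "name": {"type": "string", "maxLength": 100},
            "price": {"type": "number"},
        },
    }
    product_ref = {"$ref": "#/definitions/Product"}
    return {
        "swagger": "2.0",
        "info": {"title": "Shop API", "version": "1.0.0"},
        "basePath": "/api/v1",
        "paths": {
            "/products": {
                "get": {
                    "summary": "Get a list of products",
                    "produces": ["application/json"],
                    "responses": {
                        "200": {
                            "description": "List of products",
                            "schema": {"type": "array", "items": product_ref},
                        }
                    },
                }
            },
            "/products/{id}": {
                "put": {
                    "summary": "Update a product",
                    "consumes": ["application/json"],
                    "parameters": [
                        {"name": "id", "in": "path", "required": True, "type": "integer"},
                        {"name": "body", "in": "body", "required": True, "schema": product_ref},
                    ],
                    "responses": {
                        "200": {"description": "Updated product", "schema": product_ref}
                    },
                }
            },
        },
        "definitions": {"Product": product},
    }


def list_operations(spec):
    """Return sorted (METHOD, path) pairs, handy for a quick review."""
    return sorted(
        (method.upper(), path)
        for path, operations in spec["paths"].items()
        for method in operations
    )


if __name__ == "__main__":
    print(json.dumps(build_spec(), indent=2))
```

Because the file is plain JSON with a fixed structure, documentation portals and class generators can all read the same description.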

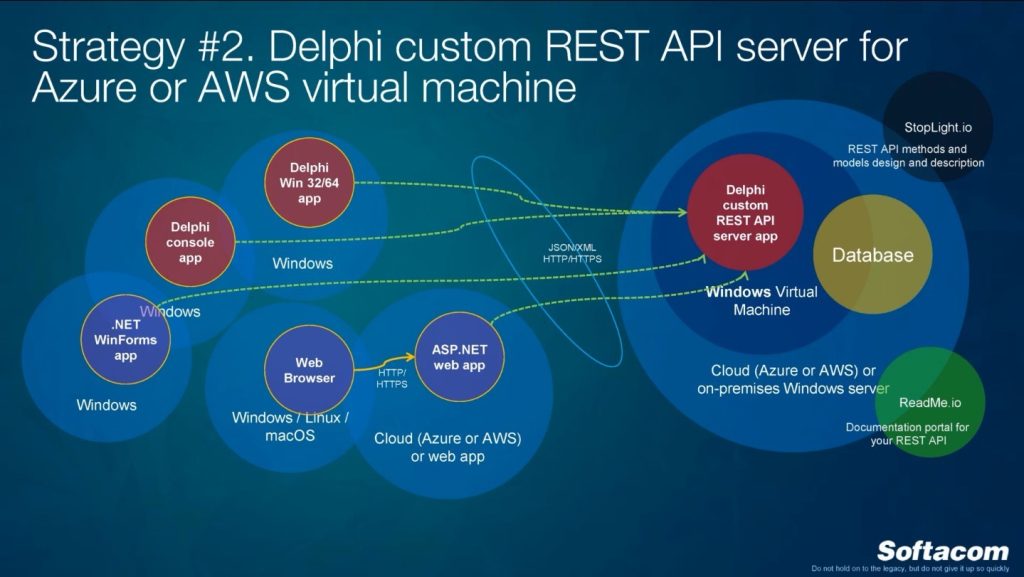

Strategy #2. I think this is what a lot of people have already tried. The idea is simple: I can take a Windows virtual machine, write a Delphi application that handles HTTP requests, and implement the REST API server myself. It can work, one hundred percent. But you have to look after this application: make sure it has not crashed, think about how to keep it up to date, and keep it running without all these problems, which usually means some additional software has to monitor it. You can do that with Delphi, and a lot of people living in the Delphi world love this solution because you still use Delphi on both the client and the server side. Somebody may tell me that we can create a module, also developed in Delphi, and deploy it to Internet Information Services. Yes, but right now I’m talking about a custom Delphi REST API server executable. I don’t like this solution because it’s not stable.
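The "additional software that has to check it" is typically some kind of watchdog. Below is a minimal sketch of one in Python, assuming the server is a separate executable that exits with a non-zero code when it crashes. The `supervise` function and its parameters are hypothetical, and in production you would more likely rely on a Windows service wrapper or a similar ready-made supervisor.

```python
import subprocess
import sys
import time


def supervise(command, max_restarts, delay_seconds=0.0):
    """Run a command and restart it after each crash.

    Returns the number of times the command was launched. A zero exit
    code is treated as an intentional shutdown and ends the loop.
    """
    launches = 0
    while True:
        launches += 1
        exit_code = subprocess.run(command).returncode
        if exit_code == 0 or launches > max_restarts:
            return launches
        time.sleep(delay_seconds)  # brief pause before restarting


if __name__ == "__main__":
    # Stand-in for a crashing server: a process that exits with code 1.
    crashing_server = [sys.executable, "-c", "import sys; sys.exit(1)"]
    print("launches:", supervise(crashing_server, max_restarts=2))
```

Even this small loop is one more piece of software that you have to deploy, monitor, and update, which is part of why I call this strategy less stable than a managed platform.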

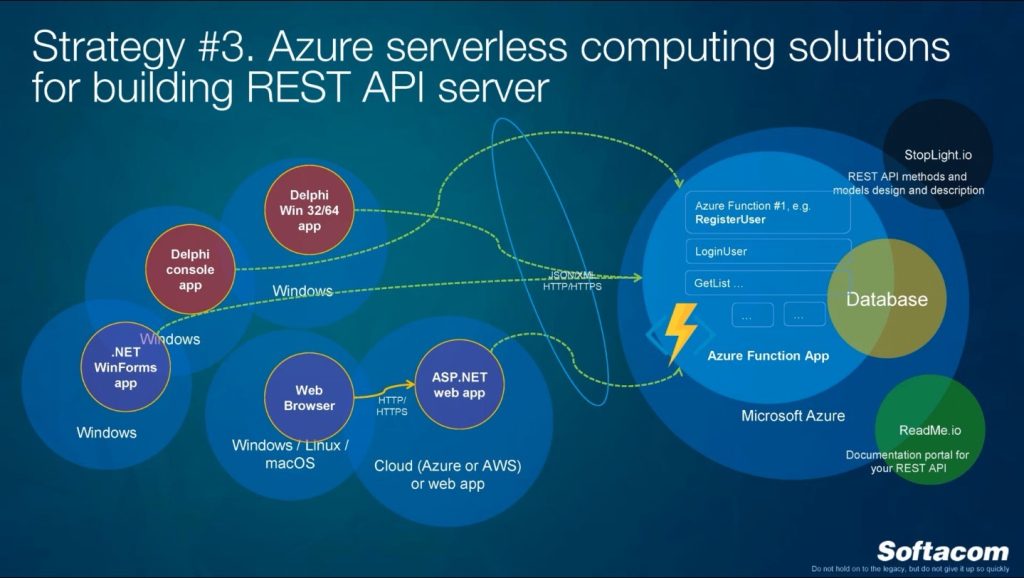

Strategy #3. Maybe somebody will kill me, but this is my favorite solution, fortunately or unfortunately. This is a modern technology that was introduced maybe half a year or a year ago. It is called Azure serverless computing, and it lets you create your REST API server with a native Azure solution, project, and programming language. Unfortunately, this is not a Delphi solution: if you choose it, there will be no Delphi infrastructure on the server side. Azure and Microsoft are now popularizing the idea of putting everything, including a lot of calculations, into the cloud, and they introduced something called an Azure Function App. It is like a slot in the cloud where you upload your precompiled library, just the logic: an entry point and the business logic behind it, which reads data from the request, processes it, and works with the database. It is not a classic web application, and each function is isolated from the others. Each Azure Function App can contain a list of Azure Functions, for example one function per entry point of your REST API server. For now you have two options. The first: you develop the Azure Function in Visual Studio in the classic way, with the project on your machine. You create the project, write the code, compile, test, and deploy to the cloud; you just upload a DLL to Azure. With the second option you don’t even need a client machine with Visual Studio or any development tools. You just take a web browser, even on a Mac (yes, we have Visual Studio for Mac now as well), go to the Azure portal, create an Azure Function, write the code in the portal, in the browser, using for example C#, and… you have a working server-side application. You can even test it in the cloud from the browser and perform queries, and your developer can connect from any place in the world, fix something if it has broken, and it will work. Microsoft provides huge possibilities for scaling the speed of these Azure Functions and the resources available for calculations. It’s a very interesting solution.

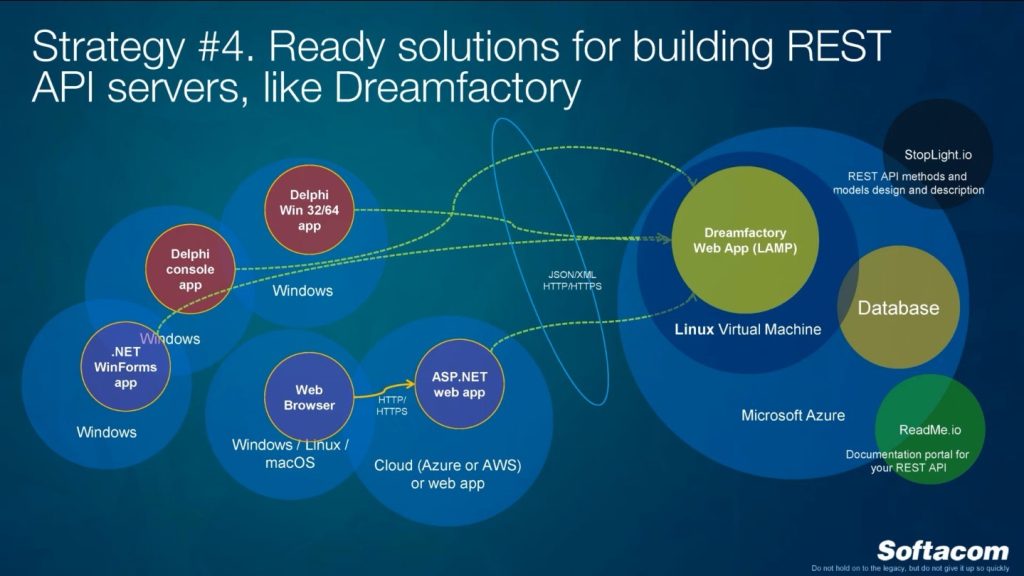

Here is the last strategy. You can take a ready-made solution for building REST API servers. As an example I took DreamFactory. Somebody may ask what the reason is to develop everything from scratch when everything already exists. If I have invented something, a hundred people have invented the same thing at the same time. We have RAD Server from Embarcadero, but there are other solutions too, and one of them is DreamFactory. You can google it and you will find it. It is the same kind of web application, and it can be deployed to a Linux virtual machine. Unfortunately, it can’t be deployed to a slot or a web application; it has to be deployed to a virtual machine with an operating system, and it again acts as a wrapper for your database. It supports different database types: it can work with SQL Server, MySQL, Sybase, and Oracle, and it provides you with the same REST API. Of course, it has various limitations and, unfortunately, if you want to customize it and add some middle logic between your REST API response and the database, you can do that only in PHP, as far as I remember, and maybe also in JavaScript. It is open source and free software, but for large commercial databases it is not free: it costs a lot of money if you want to use, for example, SQL Server or Oracle. If you use MySQL, for example, it is totally free. It’s a really interesting solution if you want to get started. Of course, you can use RAD Server; sorry, I don’t remember whether it has a trial or community version, I don’t think so, but you can try it, then try DreamFactory, and choose the best solution.

This is what we have already tried and implemented in our company. I hope you have found some interesting ideas. See you at the next webinars!

You can contact us for consultation on software application migration. We will be glad to help you!